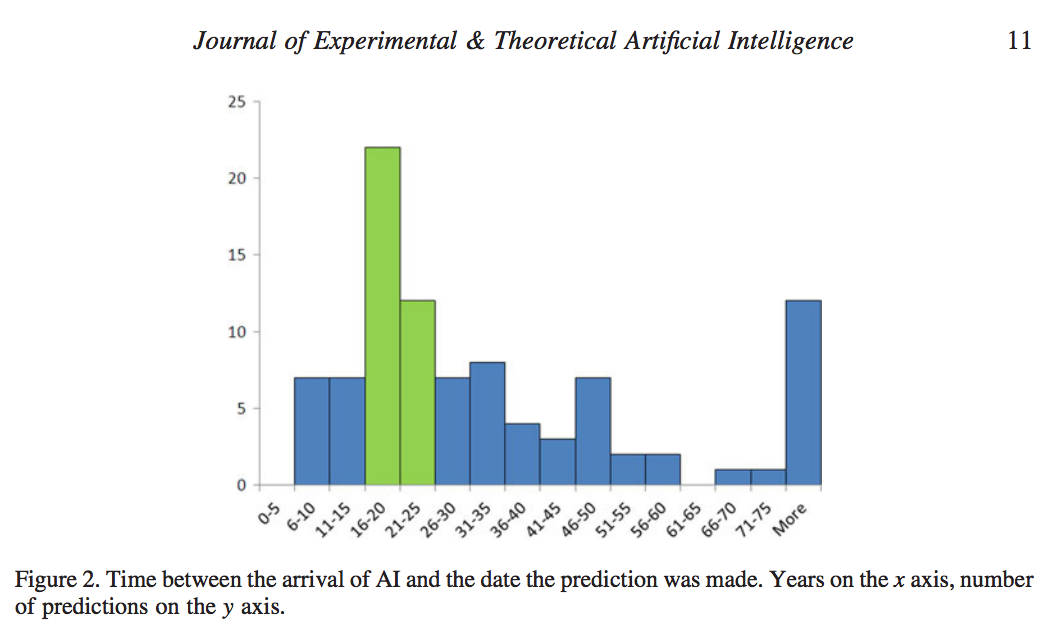

Stuart Armstrong and Kaj Sotala recently published a new paper in the Journal of Experimental & Theoretical Artificial Intelligence. Among other things, they confirm the well-known adage that “AI is always 20 years away”.

Abstract:

Predicting the development of artificial intelligence (AI) is a difficult project – but a vital one, according to some analysts. AI predictions already abound: but are they reliable? This paper starts by proposing a decomposition schema for classifying them. Then it constructs a variety of theoretical tools for analysing, judging and improving them. These tools are demonstrated by careful analysis of five famous AI predictions: the initial Dartmouth conference, Dreyfus’s criticism of AI, Searle’s Chinese room paper, Kurzweil’s predictions in the Age of Spiritual Machines, and Omohundro’s ‘AI drives’ paper. These case studies illustrate several important principles, such as the general overconfidence of experts, the superiority of models over expert judgement and the need for greater uncertainty in all types of predictions. The general reliability of expert judgement in AI timeline predictions is shown to be poor, a result that fits in with previous studies of expert competence.